Tags: search, nlp, voice-assistants, engineering, python, latency, information-retrieval

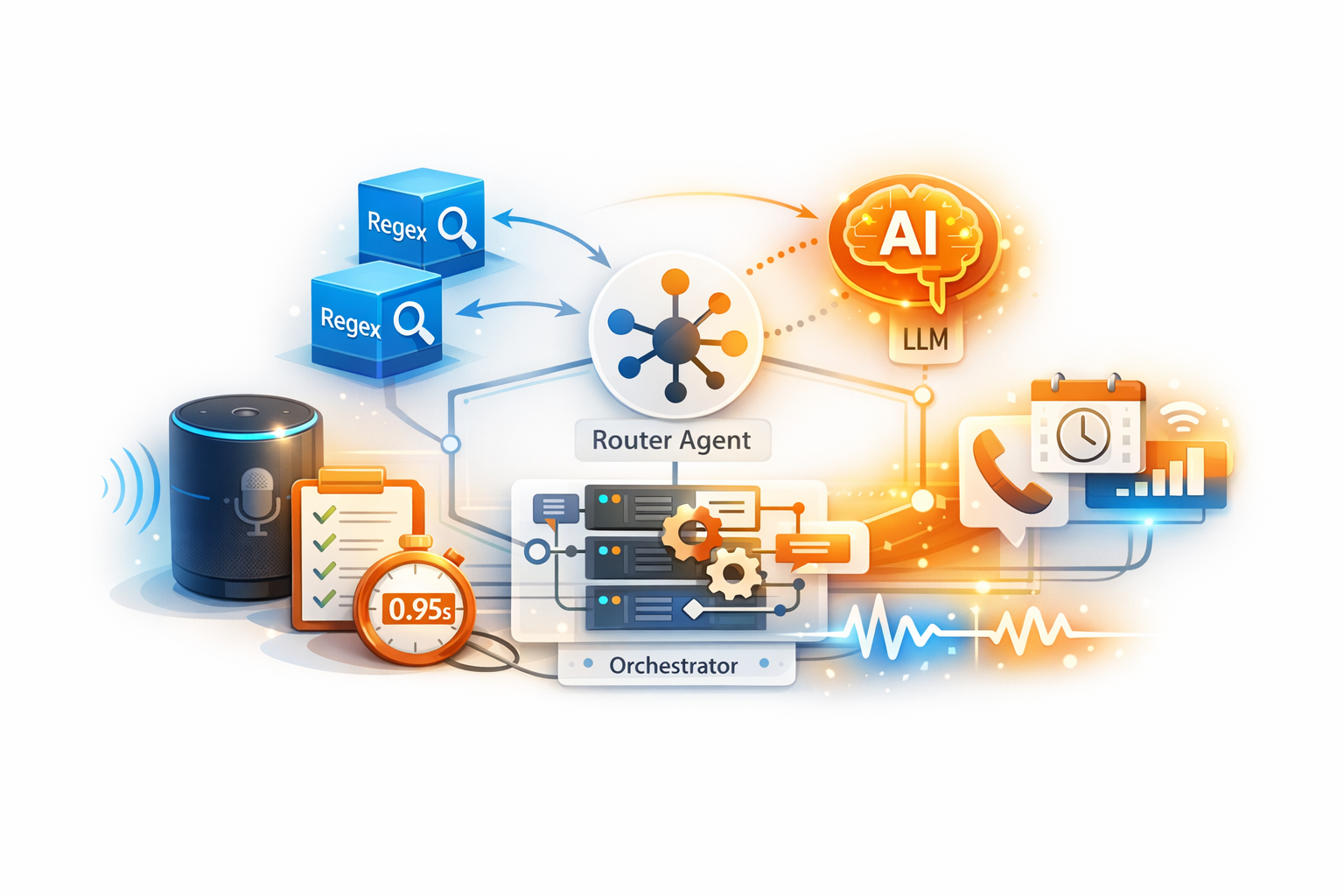

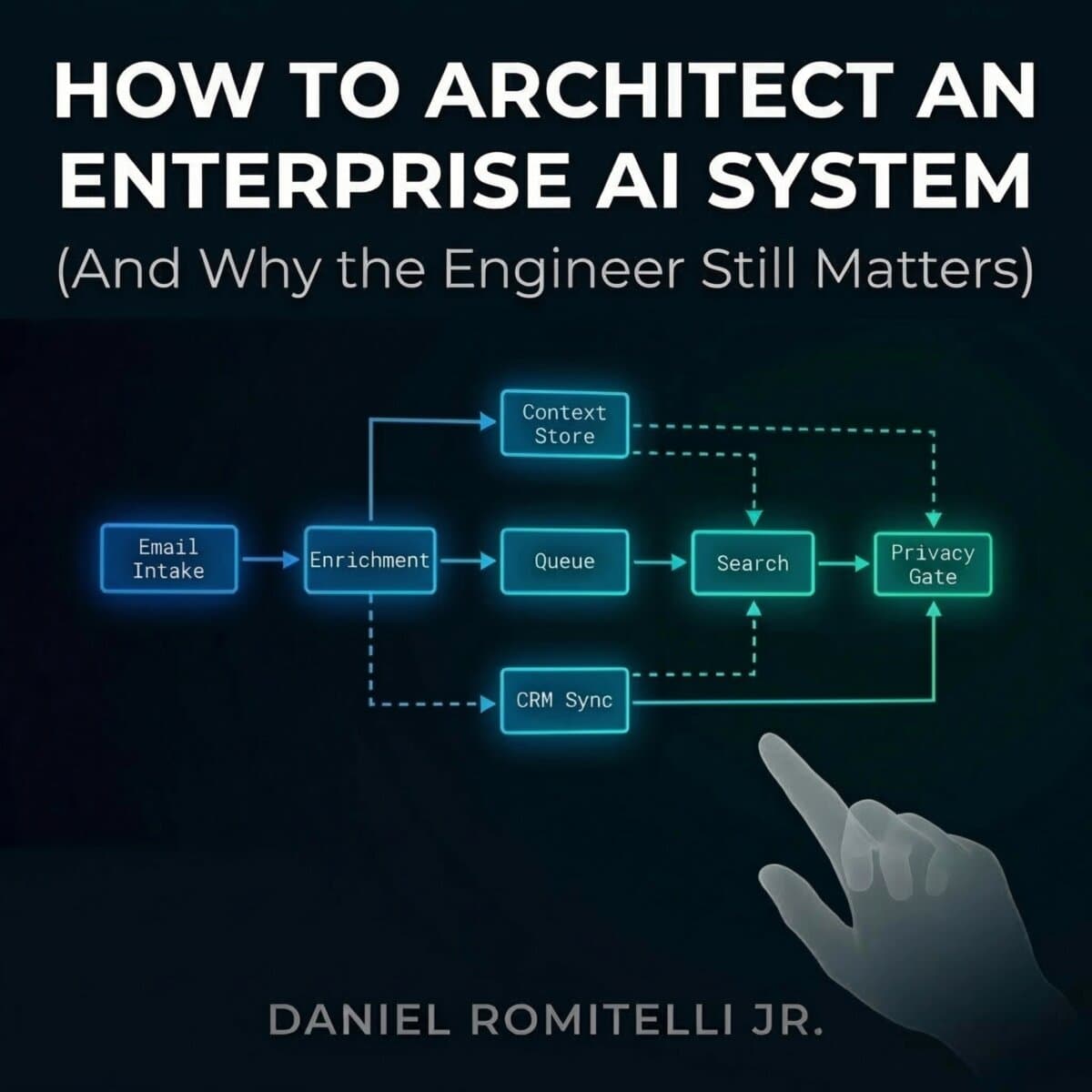

Search That Refuses to Think: The Pattern‑First Query Parser I Use for Fast Intent + Entity Extraction

In my voice-first operations product, I stopped treating “search” as retrieval and started treating it as compilation: speech → intent → entities → an executable query plan. This post documents the query parser I built after an LLM-first attempt produced latency spikes and inconsistent structure. I’ll show the intent contract, the rule engine (compiled regex + token maps), the entity extractors (locations, titles, numeric limits), caching strategy, ambiguity detection, and the benchmark harness I used to validate latency at scale.

Daniel Anthony Romitelli Jr. · March 5, 2026
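As a rough orientation for the pipeline summarized above (text → intent → entities → query plan), the following is a minimal Python sketch, not the production parser. Every name in it (`INTENT_RULES`, `LOCATIONS`, `TITLES`, `ParsedQuery`, `parse`, `to_plan`) and every pattern and vocabulary entry is a hypothetical placeholder chosen for illustration. It assumes the speech has already been transcribed to text, and it treats "ambiguous" simply as "more than one intent rule matched".

```python
import re
from dataclasses import dataclass
from functools import lru_cache

# Hypothetical intent rules: (intent name, compiled pattern), checked in order.
INTENT_RULES = (
    ("find", re.compile(r"\b(?:find|show|list|search)\b")),
    ("count", re.compile(r"\bhow many\b")),
)

# Hypothetical token maps: surface form -> canonical value.
LOCATIONS = {"new york": "New York", "boston": "Boston"}
TITLES = {"engineer": "Engineer", "engineers": "Engineer",
          "manager": "Manager", "managers": "Manager"}


def _alternation(vocab):
    """Compile one regex that matches any key of a token map as a whole word."""
    return re.compile(r"\b(" + "|".join(re.escape(k) for k in vocab) + r")\b")


LOCATION_RE = _alternation(LOCATIONS)
TITLE_RE = _alternation(TITLES)
LIMIT_RE = re.compile(r"\b(?:top|first|limit)\s+(\d+)\b")


@dataclass(frozen=True)
class ParsedQuery:
    intent: str        # first matching intent, or "unknown"
    entities: tuple    # (name, value) pairs; immutable so cached results are safe to share
    ambiguous: bool    # True when more than one intent rule matched


@lru_cache(maxsize=1024)
def _parse_normalized(text: str) -> ParsedQuery:
    matched = [name for name, pattern in INTENT_RULES if pattern.search(text)]
    entities = []
    if (m := LIMIT_RE.search(text)):
        entities.append(("limit", int(m.group(1))))
    if (m := LOCATION_RE.search(text)):
        entities.append(("location", LOCATIONS[m.group(1)]))
    if (m := TITLE_RE.search(text)):
        entities.append(("title", TITLES[m.group(1)]))
    return ParsedQuery(
        intent=matched[0] if matched else "unknown",
        entities=tuple(entities),
        ambiguous=len(matched) > 1,
    )


def parse(utterance: str) -> ParsedQuery:
    """Lowercase and collapse whitespace so equivalent utterances share a cache entry."""
    return _parse_normalized(" ".join(utterance.lower().split()))


def to_plan(query: ParsedQuery) -> dict:
    """Turn a parsed query into a plain dict that a query executor could consume."""
    entities = dict(query.entities)
    limit = entities.pop("limit", None)
    return {"op": query.intent, "filters": entities, "limit": limit}


if __name__ == "__main__":
    print(to_plan(parse("Show top 5 engineers in New York")))
```

Normalizing before the cached call is a deliberate choice in this sketch: transcripts of the same request often differ only in casing or spacing, and keying the cache on the normalized string lets those variants reuse one parse.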